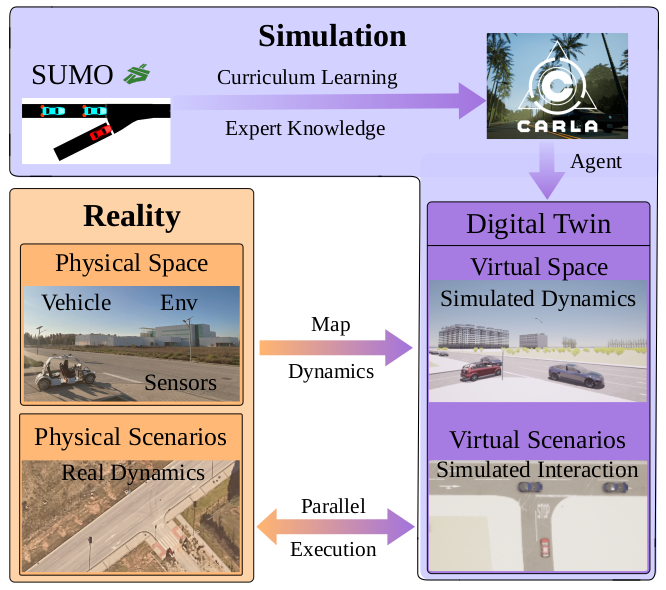

This paper introduces a novel methodology for implementing a practical Decision Making module within an Autonomous Driving Stack, focusing on merge scenarios in urban environments. Our approach leverages Deep Reinforcement Learning and Curriculum Learning, structured into three stages: initial training in a lightweight simulator (SUMO), refinement in a high-fidelity simulation (CARLA) through a Digital Twin, and final validation in real-world scenarios with Parallel Execution. We propose a Partially Observable Markov Decision Process framework and employ the Trust Region Policy Optimization algorithm to train our agent. Our method significantly narrows the gap between simulated training and real-world application, offering a cost-effective and flexible solution for Autonomous Driving development. The paper details the experimental setup and outcomes in each stage, demonstrating the effectiveness of the proposed methodology.
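
The three-stage curriculum described above can be pictured as a policy handed from one stage to the next. The following is a minimal sketch of that hand-off only, not the paper's implementation: the `Stage` type, the `run_curriculum` helper, the dictionary used as a stand-in for policy weights, and the stub stage functions are all hypothetical names introduced for illustration. No SUMO, CARLA, or TRPO calls are made, and treating the real-world stage as validation-only is an assumption based on the abstract's wording.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

# Hypothetical stand-in for the agent's policy parameters.
Policy = Dict[str, float]


@dataclass
class Stage:
    name: str                         # stage label, taken from the abstract
    trains: bool                      # False for validation-only stages (assumption)
    run: Callable[[Policy], Policy]   # hypothetical stage routine


def run_curriculum(stages: List[Stage], policy: Policy) -> Tuple[Policy, List[str]]:
    """Run stages in order, carrying the policy forward between them."""
    visited: List[str] = []
    for stage in stages:
        # Each stage works on a copy so a validation stage cannot mutate the policy.
        result = stage.run(dict(policy))
        if stage.trains:
            policy = result
        visited.append(stage.name)
    return policy, visited


# Placeholder stage routines: each only counts that it ran.
def train_in_light_simulator(policy: Policy) -> Policy:
    policy["stages_trained"] += 1.0   # stands in for initial training (SUMO)
    return policy


def refine_in_digital_twin(policy: Policy) -> Policy:
    policy["stages_trained"] += 1.0   # stands in for refinement (CARLA)
    return policy


def validate_in_real_world(policy: Policy) -> Policy:
    return policy                     # evaluation only, no weight update (assumption)


CURRICULUM = [
    Stage("SUMO", True, train_in_light_simulator),
    Stage("CARLA digital twin", True, refine_in_digital_twin),
    Stage("real-world parallel execution", False, validate_in_real_world),
]

final_policy, visited = run_curriculum(CURRICULUM, {"stages_trained": 0.0})
```

In this sketch the ordering of `CURRICULUM` is the curriculum: the policy that leaves the lightweight simulator is the starting point for the high-fidelity stage, and the last stage only observes it.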

@article{gutierrez2024sim2real,
  author = {Gutiérrez-Moreno, Rodrigo and Barea, Rafael and López-Guillén, Elena and Arango, Felipe and Revenga, Pedro and Bergasa, Luis Miguel},
  title = {Decision Making for Autonomous Driving Stack: Shortening the Gap from Simulation to Real-World Implementations},
  journal = {35th IEEE Intelligent Vehicles Symposium (IV)},
  year = {2024},
}